Big Compute Podcast: HPC and Diversity: Life lessons from Irene Qualters

In this Big Compute Podcast episode Gabriel Broner interviews Irene Qualters about her career and the evolution of HPC. Irene, an HPC pioneer, went from being a young, inexperienced female engineer working with Seymour Cray to becoming president of Cray Research. After 20 years at Cray Research she decided it was time for a change and went into the pharma space and eventually the National Science Foundation. She was awarded the 2018 HPCwire Readers’ Award for Outstanding Leadership in HPC.

Find the Big Compute Podcast on Apple Podcasts.

Overview and Key Comments

Irene, can you tell us about your early days at Cray?

“I was really fortunate when I came to Cray because at that point it was a startup and at that time Seymour Cray was quite active. I don’t think any other person could have the breadth and depth of system design he had. Even though he didn’t like meetings, eight of us would meet with him, and he would go toe-to-toe with each of us to discuss each area and go as deep as was necessary. It gave me a holistic understanding of things at a detailed level and also how your work fits in the bigger landscape. That really stood out for me as a learning.”

How was your career impacted?

“It was early in my career, after graduate school. I came to a group of people who were mid-career and spanned every dimension of high performance computing. I was really motivated to work on challenging problems that involved mathematics and computing. I had the opportunity to work on the compiler, to take risks of my own, to travel internationally, and to get a deep sense of what the potential was for the work I was doing. I learned to appreciate how generally important that is. I wasn’t pushed but I was encouraged, and I was given every opportunity to develop, even though I was the youngest and one of the few females. I was fortunate they could take inexperienced people at the time. My suggestion for people coming out of school is to try to situate yourself in a place where you can be exposed to many different things, which can really be career-forming, as it was for me.”

What was the focus of your work?

“We worked on the first commercially successful auto-parallelizing compiler. That was a wonderful experience. The theory about dependency analysis was being developed in academia. In order to implement a practical product we had to keep abreast of that work but use a lot of practical heuristics. That learning became invaluable.”

Was auto-parallelization a key reason for Cray’s success?

“We had Moore’s law at our back. Chip designs were shrinking and one was able to ride that curve by staying upfront. We would parallelize and boost the performance 10 times, while staying at the edge of that curve helped us increase performance even without parallelization. That was a very important attribute that meant that people would start faster and only improve over time.”

Anything about the customers?

“The relationships I formed with customers hold till today. It was a really unique time. Those relationships formed the basis of what the HPC community is today. The bond was formed trying to achieve together things that weren’t possible before.”

Why did you choose to go to Merck after Cray?

“I had decided I wanted to make a change after 20 years. I had a strong affinity with the application space of High Performance Computing. I had seen it in many different ways and fields. I wanted to stay in industry. I liked the focus. It just resonated with me. What drew me to Merck was that I had seen what had happened to the realm of physics in the 20th century, and the rich role computation played in the physical sciences, and I thought pharmaceutical science was poised to make the transition in this century. I knew it was early stages, but I saw an opportunity at Merck where I could have a role in that transformation that was really based on computational capabilities. I was imagining the elimination of animal trials because one could deepen the knowledge of biological and chemical systems to predict the outcomes without animal testing, much like replacing wind tunnels with computational models. That is still at the frontiers: simulations are evolving, progress is being made, but we are not there yet. I enjoyed the time, and saw the field of genetics start to arise. It was really rewarding. What caused me to leave is that I started to miss computer science. That caused me to go back to areas that would bring me a little bit closer to it.”

Can you tell us about your time at NSF?

“I wanted to find a role where I could be of service to the community I felt deep loyalty to. NSF focuses on supporting largely the academic community. I found it to be a rich environment. It took me again to the mediation between where the technology is going, where the frontier of different disciplines is, and how they can use a merging of technologies. It was quite a rich space.”

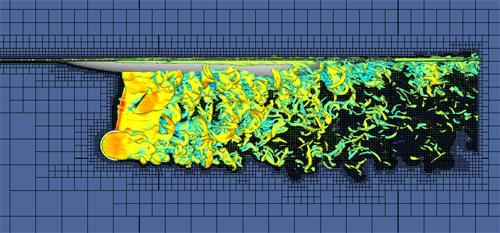

“We supported researchers who were advancing science, universities who were innovating in computing, and international collaborations. It was really their successes. It was very enriching. We funded work at the University of Illinois that enabled the simulations of the capsid that protects the HIV virus, which can open the doors to develop new drugs. We also funded an international group of researchers looking to detect evidence of gravitational waves from data coming from interferometers, and match the data with simulations of black holes colliding. It was not work that I did, but I played a small part by supporting others to acquire systems.”

What advice do you have for the next generation of leaders?

“A couple of things I would say may sound trite. At moments at Cray that I can think of, and here at Los Alamos, some of the most profound advances that I have seen come from groups of people that have very different perspectives, very different ideas. I am really a firm believer in diversity in all its aspects, and by that I mean ways of thinking, very different perspectives, and you get that if you bring people in from different backgrounds and cultures that really can represent quite unique ways of looking at things. Also multi-generation: early career, mid-career, as well as more mature career people. You need that in order to get the mix of ideas that really advances either technologies or fields. One can see that happening in quantum research. Being able to work in that environment, and being able to lead in an environment where people have different points of view, and disagree, enables us to take a field in a different direction, a direction that no one could take on their own. We need the next generation to be as diverse as it can be, in the US and internationally. We have to struggle to collectively bring our different disciplines to bear on the world’s hardest, most challenging problems.”

Irene Qualters

Irene Qualters currently serves as Associate Laboratory Director for Simulation and Computation at Los Alamos National Lab. She previously served as a Senior Science Advisor in the Computing and Information Science and Engineering (CISE) Directorate of the National Science Foundation (NSF), where she contributed to strategic leadership in new directions for the CISE Directorate. In her nearly nine years at NSF, she had responsibility for developing NSF’s vision and portfolio of investments in high performance computing, and played a leadership role in interagency, industry, and academic engagements to advance computing. Irene also served on the Science and Technology Committee of the LLNS/LANS Board of Governors. Prior to her NSF career, Irene had a distinguished 30-year career in industry, with a number of executive leadership positions in research and development in the technology sector. During her 20 years at Cray Research, she was a pioneer in the development of high performance parallel processing technologies to accelerate scientific discovery. Subsequently, as Vice President, she led Information Systems for Merck Research Labs, focusing on international cyberinfrastructure to advance all phases of pharmaceutical R&D. Irene has an M.S. in Computer Science from the University of Detroit and a B.S. from Duquesne University.

Gabriel Broner

Gabriel Broner is VP & GM of HPC at Rescale. Prior to joining Rescale in July 2017, Gabriel spent 25 years in the industry as OS architect at Cray, GM at Microsoft, Head of Innovation at Ericsson, and VP & GM of HPC at SGI/HPE.