Separation Anxiety: I’m Away From My Cluster

Distractions, noise, kids, interruptions. All part of the modern experience, it would seem. Here at Rescale, like many others, we have been stuck working from home for 67 days and counting. There are a million reasons this might be challenging. However, we feel strongly that access to your cluster and the software licenses you need to do your job should not be one of them.

Yet this seems to be the reality for most companies. According to Accenture, only 10% of companies have comprehensive plans and resources for situations like this. The rest of us are stuck with a cobbled-together distributed work environment, and it comes with numerous pains: software licenses tied to workstations we can’t get to, VPNs designed to support only 10-15% of our workforce, slow network speeds, broken engineering workflows, underpowered home computers, and more.

The result is generally a widespread drop in productivity, one that most companies simply cannot afford in challenging macroeconomic conditions. Without a way for distributed teams to work efficiently, a company risks entering a downward spiral.

The infrastructure built to enable the next generation of science, engineering, and technology assumed that the work would largely be done from the office. So what does the next generation of infrastructure look like, and how are companies investing to support this new environment?

Beefing up our VPN

Many companies already have a VPN that gives people remote access to their cluster, and sometimes to virtual desktops and more. The problem? Most companies have configured their VPN to handle only a small fraction of their workforce at one time.

A number of companies we have talked to plan to roll out VPN improvements: support for hundreds of concurrent connections, better network I/O, and more. However, a multi-layered process like this takes time. In the meantime, we see IT teams assigning each group a specific time slot during which it may use the VPN.

“I have from 9-9:30 AM and 1-1:30 PM to access my cluster, download or upload whatever data I need, or submit a job. If I miss that window I have to wait another day to get to the next step. It’s a huge waste of time,” said one engineer we spoke with.

This strategy may solve the problem one brick at a time, but it will be months before the average engineer sees an improvement in the way they work.

Send our compute-intensive jobs to a third party

Companies under pressure, knowing they can’t improve network access fast enough to maintain productivity, have turned to another option: outsourcing.

These companies engage independent firms whose data centers are more accessible and ask them to run simulations and other compute-intensive workloads on their behalf. When the third party can run a simulation on exactly the right software and version, and has capacity available, this can be a viable option for running jobs you simply can’t run on your own infrastructure.

However, working with a separate environment does present some risks. IT teams commonly run into issues when they manage multiple environments without strict and visible controls.

One of our customers told us this story about working with two environments: “The two sites ran simulations on different versions of the same software. Each one had a slightly different calculation for the stretch of a cable. When it came time to assemble our final plane, all the cables from the two versions were about six inches too short. This delayed our project by a year.”

Outsourcing makes sense with a trusted partner and very clear visibility, but in our experience, managing an environment through an intermediary is fertile ground for mistakes that can add up to big delays.

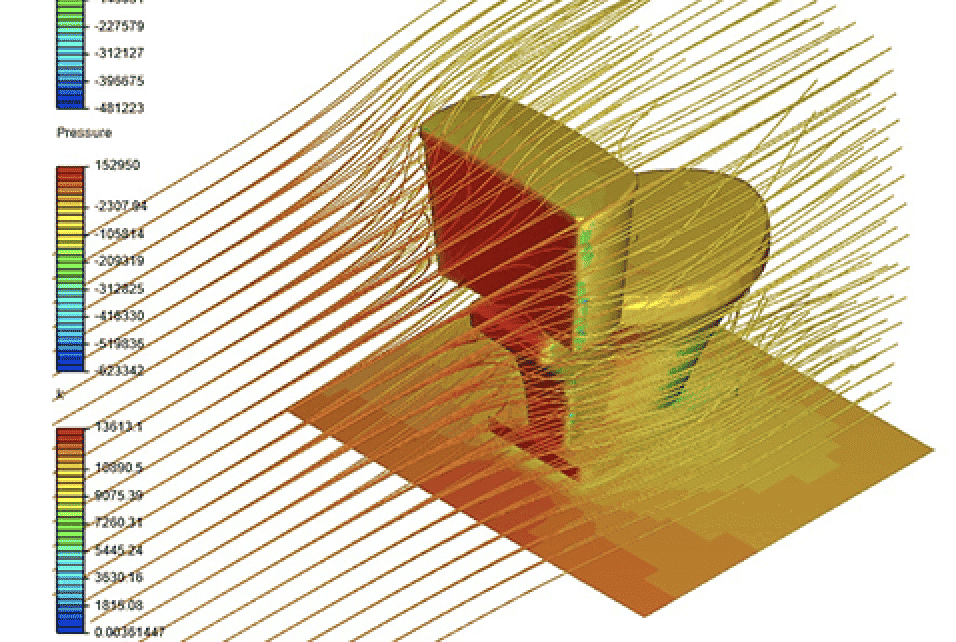

Deploy a cluster in the public cloud

Knowing the timelines and risks of the options above, some companies we have talked to have moved certain workloads to public cloud infrastructure. This option offers flexibility in compute scale, specialized hardware, and connectivity. Essentially, companies can create as many resources as their teams require, with scaling built in and delivered on demand.

The main challenges companies face when implementing a public cloud deployment are operational. This solution depends entirely on IT, systems integrators, and others, and designing and managing it is usually a larger undertaking than expected.

A quality cloud deployment needs to control, report on, and manage individual budgets for teams and projects. It needs to handle license hosting and job allocation. It needs to choose the right hardware for each job so resources are used well. It needs to shut down clusters automatically when they are not needed. And it needs spending limits so IT doesn’t get a surprise bill at the end of the quarter.
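To make two of these requirements concrete, here is a minimal sketch: a per-project budget with a soft limit (warn administrators) and a hard limit (refuse new jobs), plus a check that flags clusters idle past a timeout for shutdown. The class and function names, dollar amounts, and timeout are hypothetical; this is not any vendor’s API, and a real deployment would pull spend and activity data from its cloud provider’s billing and monitoring services.

```python
from dataclasses import dataclass


@dataclass
class ProjectBudget:
    """Hypothetical per-project budget with a soft (warn) and hard (block) limit."""

    name: str
    soft_limit: float  # spend above which admins are warned
    hard_limit: float  # spend above which new jobs are refused
    spent: float = 0.0  # spend already committed to this project

    def check_job(self, estimated_cost: float) -> str:
        """Classify a new job by where it would leave total spend."""
        projected = self.spent + estimated_cost
        if projected > self.hard_limit:
            return "reject"  # would exceed the hard cap: no surprise bill
        if projected > self.soft_limit:
            return "warn"  # allowed, but flagged to administrators
        return "allow"


def clusters_to_shut_down(
    last_activity: dict[str, float], now: float, idle_timeout: float
) -> list[str]:
    """Return IDs of clusters idle longer than idle_timeout seconds, sorted."""
    return sorted(
        cluster_id
        for cluster_id, last_seen in last_activity.items()
        if now - last_seen > idle_timeout
    )


if __name__ == "__main__":
    budget = ProjectBudget("wing-cfd", soft_limit=8_000, hard_limit=10_000, spent=7_500)
    print(budget.check_job(400))    # allow  (7,900 stays under the soft limit)
    print(budget.check_job(1_000))  # warn   (8,500 passes the soft limit)
    print(budget.check_job(3_000))  # reject (10,500 passes the hard limit)
    print(clusters_to_shut_down({"a": 0.0, "b": 3_000.0}, now=3_600.0, idle_timeout=1_800.0))  # ['a']
```

Checking the projected total before a job starts, rather than reacting to the bill afterward, is what turns a budget from a report into a limit.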

Even when a system addresses all these issues, regulatory requirements may prevent it from handling certain types of information. If the system is not ITAR, FedRAMP, or even SOC 2 compliant, it is a non-option for certain sensitive workloads.

Investing in a managed platform

Managed platforms let companies gain the benefits of cloud computing as they need it, while solving the operational and compliance issues described above. These platforms weren’t built specifically to enable distributed teams, but of the options here they are the only ones built to be agnostic to where teams are located.

Because they are built on cloud resources, access to them is inherently remote. That makes them robust to situations like the one we find ourselves in today, and equally useful when things return to whatever the new normal turns out to be.

Specifically, Rescale provides some unique approaches to the challenges that companies face today:

- Budget management – Rescale is built so teams can self-serve cloud resources, which means it is designed to scale on demand. For this to work in practice across an organization, administrators also need to be able to set hard and soft budgets, allocate spend, and in some cases split billing. This is native functionality on Rescale, and allocating resources takes only a few clicks.

- Access management – Teams need to be able to access the cluster and the data stored on the system. Rescale’s centralized management of user access gives people secure, controlled access to the resources they need.

- Software license management – With options to bring your own existing licenses, purchase new ones, or in some cases use on-demand licenses, Rescale offers a variety of ways to get your team up and running, whatever your current situation. Over 2,000 software versions are already installed, each just one license key away from running jobs. The system also centralizes control of software versions, which prevents mismatched versions between environments.

- Security and data management – Rescale is the most secure option when it comes to cloud HPC. As an ITAR-, FedRAMP-, and SOC 2-compliant platform, Rescale is built with solid security from day one. It uses state-of-the-art encryption and data management protections. Additionally, Rescale gives admins control to ensure users can access only the data they are permitted to see.

- Architecture management – Rescale has developed a system that not only spins up and shuts down clusters on every public cloud but also recommends the best hardware, regardless of location. Rescale knows the speed and robustness of every core type in the system and can guide users to the hardware that provides the cost, performance, and scalability they need: millions of cores and hundreds of core types, intelligently mapped to customer needs.
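We can’t show how Rescale’s recommendation engine actually works, but the basic idea behind matching hardware to a job can be sketched: given a benchmark of how fast each core type runs a workload, pick the cheapest option that still meets a deadline with the capacity available. The core type names, prices, and speeds in the example are invented placeholders, not real cloud rates, and the model assumes perfectly linear scaling, which real solvers rarely achieve.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CoreType:
    name: str
    price_per_core_hour: float  # hypothetical price, not a real cloud rate
    relative_speed: float  # per-core throughput relative to a baseline core (1.0)
    max_cores: int  # cores of this type available for the job


def recommend(
    core_types: list[CoreType], baseline_core_hours: float, deadline_hours: float
) -> Optional[tuple[str, int, float]]:
    """Return (name, cores, cost) for the cheapest core type that meets the deadline.

    Assumes perfectly linear scaling; returns None if no core type fits.
    """
    best: Optional[tuple[str, int, float]] = None
    for ct in core_types:
        core_hours = baseline_core_hours / ct.relative_speed
        cores_needed = math.ceil(core_hours / deadline_hours)
        if cores_needed > ct.max_cores:
            continue  # cannot finish in time with the capacity available
        cost = core_hours * ct.price_per_core_hour
        if best is None or cost < best[2]:
            best = (ct.name, cores_needed, cost)
    return best


if __name__ == "__main__":
    catalog = [
        CoreType("general", 0.05, 1.0, 200),
        CoreType("compute", 0.08, 2.0, 64),
        CoreType("highmem", 0.04, 0.5, 500),
    ]
    print(recommend(catalog, baseline_core_hours=1_000, deadline_hours=10))  # ('compute', 50, 40.0)
    print(recommend(catalog, baseline_core_hours=1_000, deadline_hours=5))   # ('general', 200, 50.0)
```

Under linear scaling, cost depends only on the core type, not on how many cores run the job, so a faster core with a higher hourly price can still be the cheapest choice. Tightening the deadline changes the answer because the fast type runs out of available cores. A production recommender would replace the linear assumption with measured scaling curves per application.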

2020 will forever be the year our systems and teams were put to the ultimate test. From now on, systems will need to be pandemic-proof, so that technology empowers its users rather than restricting them. In what ways do you think HPC will change in the wake of COVID-19?

Looking for ways to optimize remote teams? Join our webinar to learn more and hear practical solutions from actual engineers.